Is web data driving the development of Artificial Intelligence?

Perhaps one of the most talked-about topics of the past two years has been Artificial Intelligence (AI). Does it exist? When will it arrive? Can we ever build a machine with human-like intelligence? In this article, I want to look at some recent trends that show how AI may be coming.

Does web data drive the development of AI?

If you have seen a lot of discussion around Big Data, Machine Learning, and Deep Learning in the past couple of months, you are not alone. For any new technology or way of understanding to be developed at large scale, there has to be an underlying driving force. This driving force usually becomes clearer as research progresses and trends become noticeable.

These three terms have become some of the most widely used expressions for describing how we extract knowledge from data. Large-scale trends in the size of these datasets are becoming clear, and the datasets appear to keep growing exponentially. Such large datasets allow researchers to apply the theories behind Machine Learning, Deep Learning, and related fields, which have surged in popularity recently because of their potential use cases for this kind of data.

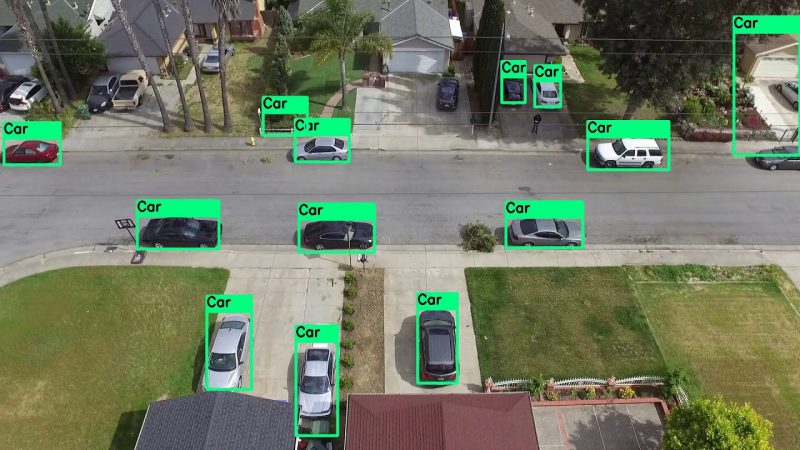

The goal is typically to train a machine learning algorithm on large web, text, image, or video datasets and then to apply that algorithm to new input data (the test set). Training is repeated over many iterations until the quality of the algorithm's predictions falls within a chosen threshold.
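To make the idea of "train in iterations until the quality is within a threshold" more concrete, here is a minimal sketch in plain Python. It is not how any particular framework does it: the function name `train_until_threshold`, the toy data, the learning rate, and the threshold value are all assumptions chosen for illustration, and the "model" is just a straight line fitted by gradient descent.

```python
def train_until_threshold(xs, ys, lr=0.05, threshold=1e-6, max_iters=10_000):
    """Fit y ~ w*x + b by gradient descent, stopping once the
    mean squared error drops to `threshold` or below."""
    w, b = 0.0, 0.0
    n = len(xs)
    loss = float("inf")
    for iteration in range(1, max_iters + 1):
        # Measure how wrong the current predictions are.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        loss = sum(e * e for e in errors) / n
        if loss <= threshold:
            return w, b, loss, iteration  # quality is good enough, stop
        # Otherwise take one gradient step on the mean squared error.
        w -= lr * 2 * sum(e * x for e, x in zip(errors, xs)) / n
        b -= lr * 2 * sum(errors) / n
    return w, b, loss, max_iters  # gave up after max_iters (last measured loss)

# Toy training data generated from y = 2x + 1 (an assumption for the demo).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b, loss, iterations = train_until_threshold(xs, ys)
print(f"w={w:.3f}, b={b:.3f}, loss={loss:.2e}, iterations={iterations}")
```

Real systems usually measure the threshold on held-out data rather than on the training set, but the loop has the same shape: predict, measure the error, adjust, repeat.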

To extract better knowledge from these large-scale datasets, Machine Learning and Deep Learning algorithms that use “unsupervised learning” have been applied recently. This means that rather than training machines with labeled examples from the real world (kittens, people dancing, and so on), they are trained on raw web data (news articles).

This approach has some clear advantages:

1) Unsupervised learning lets us find hidden features or relationships in data without any additional information such as labels. This is intuitive even for images: we can feed an algorithm a large number of cat pictures, and it will be able to pick up on common features that appear in most of them (whiskers, for example). Based on the color distribution of the pictures, it might even be able to tell the difference between cats and dogs.

2) Unsupervised learning can be used with very large datasets because no labels are involved. There is no need for a large number of experts to label the web data manually. The data only needs to be bucketed into recognizable categories, which then lets researchers find new trends within the bucketed data by applying supervised machine learning algorithms.

3) Training iterations of these models generally take days or weeks, depending on how large the dataset is. Skipping the manual labeling stage can still significantly reduce the total time required to prepare models before they are applied to test sets.
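As a rough illustration of the list above, the sketch below groups unlabeled points with k-means, one common unsupervised algorithm (the article does not say which algorithm the researchers use, so this is a stand-in). The function name `kmeans`, the toy 2D points, and the deterministic farthest-first initialisation are assumptions made for the example; libraries typically use random or k-means++ initialisation instead.

```python
import math

def kmeans(points, k, max_iters=100):
    """Group `points` (tuples of floats) into k clusters without any labels."""
    # Farthest-first initialisation: start from the first point, then keep
    # adding the point that is farthest from the centroids chosen so far.
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(math.dist(p, c) for c in centroids))
        )

    assignment = None
    for _ in range(max_iters):
        # Assignment step: each point joins its nearest centroid.
        new_assignment = [
            min(range(k), key=lambda i: math.dist(p, centroids[i])) for p in points
        ]
        if new_assignment == assignment:
            break  # nothing changed, so the clustering has converged
        assignment = new_assignment
        # Update step: move each centroid to the mean of its members.
        for i in range(k):
            members = [p for p, a in zip(points, assignment) if a == i]
            if members:
                centroids[i] = tuple(sum(c) / len(members) for c in zip(*members))
    return assignment, centroids

# Six unlabeled points forming two obvious groups (toy data).
points = [(1.0, 1.0), (1.2, 0.8), (0.8, 1.1), (8.0, 8.0), (8.2, 7.9), (7.9, 8.3)]
labels, centers = kmeans(points, k=2)
print(labels)
```

Nobody told the algorithm which points belong together. The grouping comes only from the structure of the data, which is the point made in item 1.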

What are some recent examples of AI?

The use cases for this approach are endless!

1) Researchers at Google Brain recently created an algorithm that can “hallucinate” missing parts of images. After being trained on 30,000 car pictures, the algorithm began to fill in non-existent details of these car pictures by itself. This kind of AI could be used to improve self-driving cars or any other application where it is important not to miss details such as road signs. This is even more true because, with Deep Learning models, there is typically no human intervention needed after the initial setup and training iterations.

2) A recent paper published by Facebook has shown how their AI could rival humans at understanding speech. The average human makes only one mistake for every 5,000 words, which means they get it right around 99.98% of the time, while Facebook’s algorithm reached 99.38%. The result might not look like much on paper, but this is a new record! It is a remarkable milestone for AI research and shows how far the technology has come.

Google Brain has recently released a set of tools called TensorFlow. TensorFlow is a framework that can be used for training new machine learning algorithms on large datasets. While several frameworks already existed at the time, TensorFlow allows faster training times and easy integration with other libraries, services, and programming languages, making it easier for the general public to adopt. What does this mean? It means that Machine Learning will become even more accessible to everyone!

What are some future challenges for AI?

Availability of resources for training large-scale AI models. Most of the frameworks available today require specific GPUs (Graphics Processing Units) to train these models. These can be expensive, and training without them slows down significantly. An additional challenge is the software needed to do this training (and without errors!), since it currently requires extensive knowledge of coding, operating systems, and so on. This makes it hard for people outside the research community, or for students, to adopt this kind of technology, since they might not have access to the costly hardware and expertise required for error-free transfer learning.

How can we evaluate the quality of the models/algorithms?

This is a complex problem to solve, since there is no access to labeled training data in unsupervised learning. Solutions include using metrics like perplexity, BLEU, or ROUGE, which try to approximate how well the data has been classified or generated. Even so, these metrics may not always be reliable for specific tasks.
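Of the metrics named above, perplexity is the easiest to show in a few lines. The sketch below uses the standard definition (the exponential of the average negative log-probability a model assigned to the tokens that actually occurred). The function name `perplexity` and the example probabilities are made up for illustration and do not come from any real model.

```python
import math

def perplexity(token_probs):
    """Perplexity from the probabilities a model gave to each observed token.

    Lower is better: 1.0 means the model was certain and correct every time.
    """
    if not token_probs:
        raise ValueError("need at least one probability")
    avg_neg_log_prob = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_neg_log_prob)

# A model that spreads its guesses evenly over four options at every step
# is about as "perplexed" as a four-way coin flip.
print(perplexity([0.25, 0.25, 0.25, 0.25]))

# A more confident model scores lower (hypothetical probabilities).
print(perplexity([0.9, 0.8, 0.95, 0.7]))
```

BLEU and ROUGE work differently: they compare generated text against reference text by counting overlapping word sequences, which is one reason they can be unreliable for tasks where many different outputs are equally good.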

Security concerns

Algorithms trained with Deep Learning techniques could easily be hacked if they are exposed to large enough datasets containing maliciously labeled data (as in the cat versus dog example). This kind of attack is usually called data poisoning. It might also be possible to push AI systems into generating their own code if someone accidentally exposed them to that kind of data! These hacks are sometimes confused with “Generative Adversarial Networks,” which are a training technique rather than an attack: a generator tries to produce data, and a discriminator tries to tell real data from fake.
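The effect of maliciously labeled data can be seen even on a toy problem. The sketch below is a hypothetical example, not a real attack on a deep learning system: a very simple nearest-centroid classifier separates “cat” from “dog” using a single made-up feature, and then an attacker adds deliberately mislabeled samples to the training set. All names and numbers are assumptions for the demo.

```python
def fit_centroids(samples):
    """samples: list of (feature, label). Returns {label: mean feature}."""
    sums, counts = {}, {}
    for x, label in samples:
        sums[label] = sums.get(label, 0.0) + x
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}

def predict(centroids, x):
    """Pick the label whose centroid is closest to x."""
    return min(centroids, key=lambda label: abs(x - centroids[label]))

def accuracy(centroids, samples):
    return sum(predict(centroids, x) == y for x, y in samples) / len(samples)

# Clean training data: cats have small feature values, dogs have large ones.
clean = [(1.0, "cat"), (1.5, "cat"), (2.0, "cat"),
         (8.0, "dog"), (8.5, "dog"), (9.0, "dog")]
# Poison: the attacker injects samples with the labels swapped.
poison = [(9.0, "cat")] * 4 + [(1.0, "dog")] * 4
test_set = [(1.2, "cat"), (1.8, "cat"), (8.2, "dog"), (8.8, "dog")]

clean_acc = accuracy(fit_centroids(clean), test_set)
poisoned_acc = accuracy(fit_centroids(clean + poison), test_set)
print(f"clean: {clean_acc:.2f}, poisoned: {poisoned_acc:.2f}")
```

The training code is identical in both runs. Only the data changed, which is why the quality of large web-scraped datasets matters so much for security.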

Who is responsible when an AI makes a mistake?

Should self-driving cars be banned if they are involved in accidents, even when these are not the algorithm’s fault? If so, who should take the blame: the person who created the dataset or the actual programmers? These are still big open questions that will need to be addressed eventually.